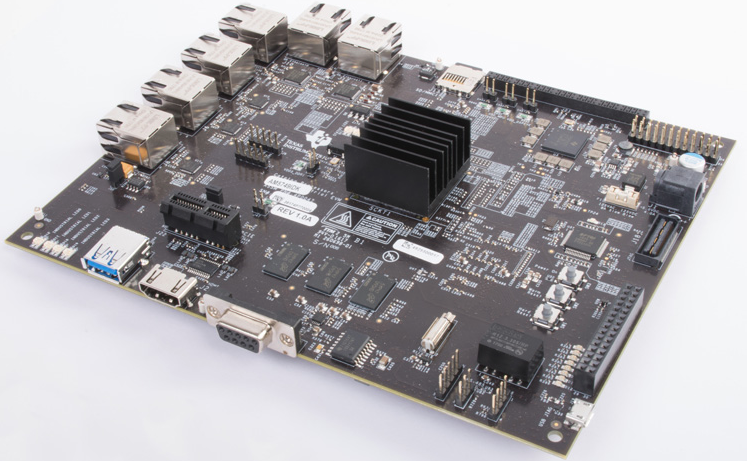

This reference design demonstrates how to use the TI Deep Learning (TIDL) library on a Sitara AM57x System-on-Chip (SoC) to bring deep learning inference to an embedded application. The design shows how to run deep learning inference on the C66x DSP cores (available in all AM57x SoCs), on the Embedded Vision Engine (EVE) subsystems, or on both. On the AM5749 SoC, the EVEs are treated as black-box deep learning accelerators.

This reference design applies to any embedded application that needs deep learning or machine learning inference. Customers who want to get started quickly with a deep learning network, or to evaluate the performance of their own networks on an AM57x device, will find a step-by-step guide to using TIDL in TI's free AM57x Processor SDK.

Features

- Embedded deep learning inference on AM57x SoC

- Performance-scalable TI deep learning library (TIDL library) on AM57x, using C66x cores only, EVE subsystems only, or a combination of C66x and EVE

- Performance-optimized reference CNN models for object classification, object detection and pixel-level semantic segmentation

- Full walk-through of the TIDL development flow: training, import and deployment

- Benchmarks of several popular deep learning networks on AM5749
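The "C66x only, EVE only, or C66x + EVE" scalability can be reasoned about with a simple back-of-envelope model. The sketch below is a hypothetical illustration, not part of TIDL or the Processor SDK. It assumes that (a) when each core processes whole frames independently and pulls the next frame as soon as it is free, per-core frame rates add, and (b) when one network is split into sequential layer groups (for example, one group on an EVE and the next on a C66x DSP) that overlap across frames, the slowest stage bounds steady-state throughput. The `Core` class, function names and all latency numbers are placeholders, not AM5749 benchmark results.

```python
from dataclasses import dataclass


@dataclass
class Core:
    """Hypothetical execution unit, e.g. one C66x DSP or one EVE."""
    name: str
    ms_per_frame: float  # assumed per-frame inference latency on this core


def throughput_fps(cores):
    """Aggregate frames/second when every core runs whole frames in parallel.

    Assumes an ideal work queue (each core takes the next frame when free),
    so the individual per-core rates simply add up.
    """
    return sum(1000.0 / c.ms_per_frame for c in cores)


def pipelined_fps(stage_ms):
    """Steady-state frames/second for a network split into sequential stages.

    Stages (layer groups) run on different cores and overlap across
    consecutive frames, so the slowest stage limits throughput.
    """
    return 1000.0 / max(stage_ms)


if __name__ == "__main__":
    # Placeholder latencies for illustration only.
    dsp = [Core("dsp0", 100.0), Core("dsp1", 100.0)]
    eve = [Core("eve0", 50.0), Core("eve1", 50.0)]
    print(throughput_fps(dsp))          # 20.0  (C66x only)
    print(throughput_fps(eve))          # 40.0  (EVE only)
    print(throughput_fps(dsp + eve))    # 60.0  (C66x + EVE, whole frames)
    print(pipelined_fps([40.0, 25.0]))  # 25.0  (EVE stage -> DSP stage)
```

Real throughput also depends on data transfer, layer support on each core and memory bandwidth, so measured benchmarks on the target device remain the reference.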

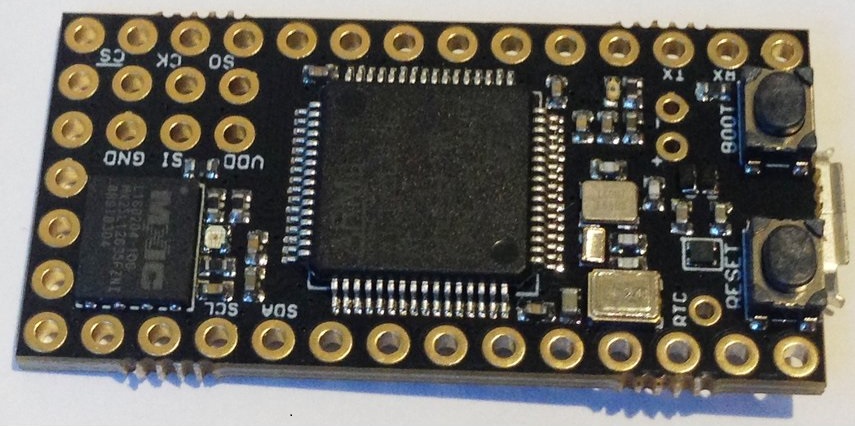

- This reference design is tested on the AM5749 IDK EVM and includes the TIDL library for the C66x cores and EVE subsystems, reference CNN models and a getting-started guide

More information: www.ti.com