Tag: Self-learning


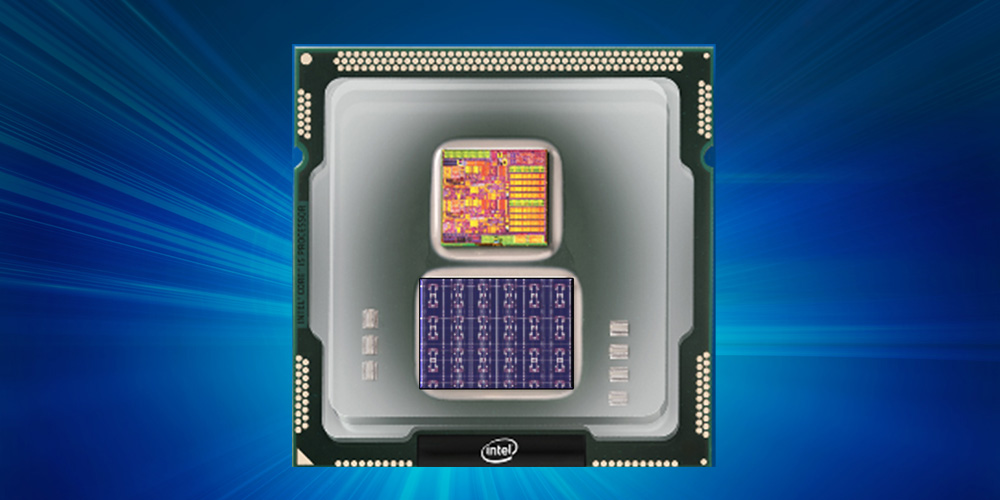

Intel Introduces Loihi: A Self-Learning Processor That Mimics Brain Functions

Intel has developed a first-of-its-kind self-learning neuromorphic chip, codenamed Loihi. It mimics the way animal brains function, learning how to operate from various modes of feedback it receives from its environment. Unlike convolutional neural network (CNN) processors and other deep learning processors,...
