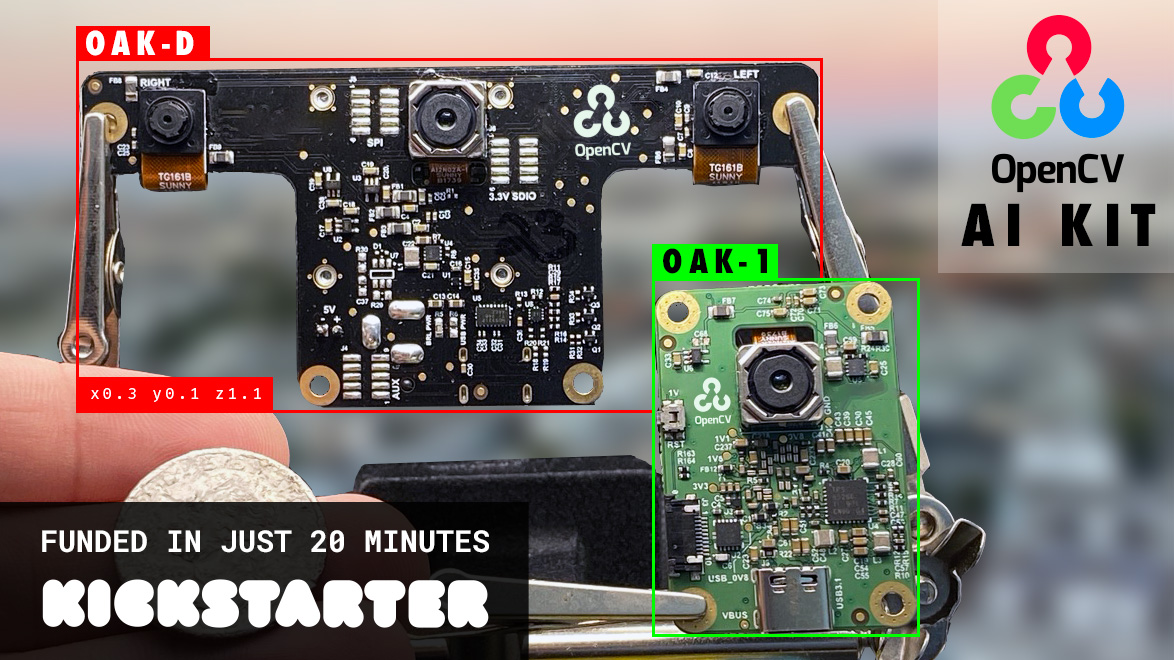

OpenCV has announced its AI Kit, called OAK, on Kickstarter. It is an MIT-licensed open-source software and Myriad X-based hardware solution for computer vision at any scale. OAK comprises the OAK API software and two types of hardware: OAK-1 and OAK-D. Both devices are tiny artificial intelligence (AI) and computer vision (CV) powerhouses, with OAK-D adding spatial AI based on stereo depth to the 4K/30 12MP camera that both models share. With a setup time of under 30 seconds, the OAK-1 and OAK-D make all of these features accessible to anyone.

If you want to get started in spatial AI, the OAK API is the fastest way, thanks in part to the OAK-1 module’s single USB-C connector, which provides both data and power. The hardware is easy to use: going from unboxing to running an advanced image classifier takes less than one minute. OAK ships with neural nets for face mask detection (mask/no mask), age recognition, emotion recognition, face detection, facial landmark detection (corners of the eyes, mouth, chin, etc.), general object detection (20 classes), pedestrian detection, and vehicle detection.

The OAK-1 and OAK-D support Linux, macOS, and Windows hosts, which makes them versatile enough for a prototyping flow of any size. You can arrange computer vision + AI workflows with Pipeline Builder, a node-based editor that includes previews to ensure that your crops and zooms are passing the right data on to the next step in the process. The hardware also offers feature tracking, hardware-level H.265 support, and 4K output. There are two ways to get spatial AI results from the OAK-D: Monocular Neural Inference fused with Stereo Depth, and Stereo Neural Inference. Each mode has advantages and disadvantages for specific use cases, as described below.

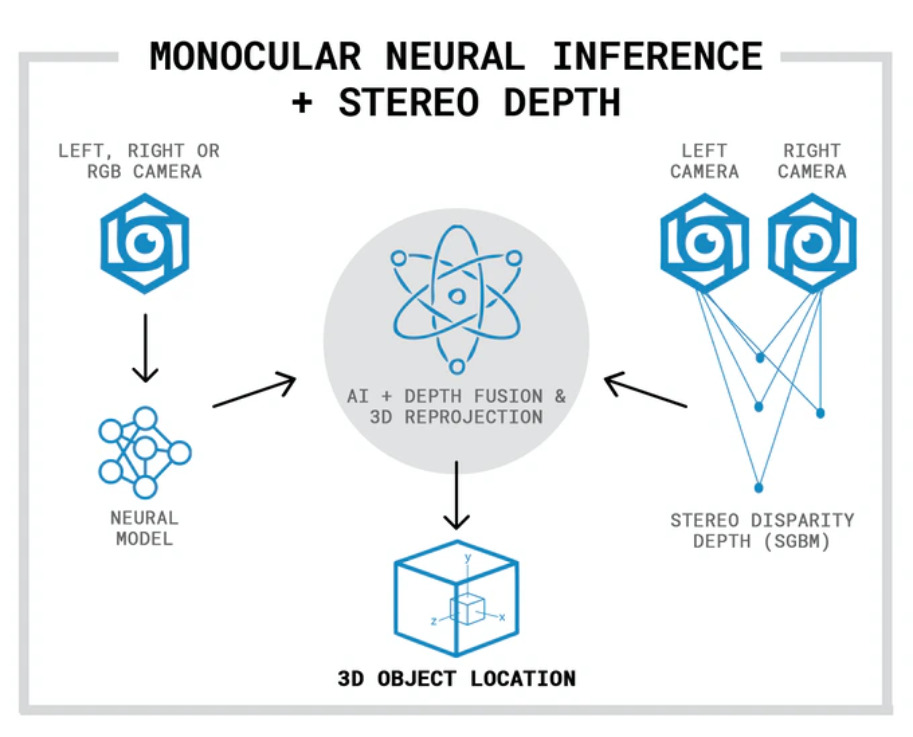

In Monocular Neural Inference fused with Stereo Depth, the neural network runs on a single camera (the left, right, or RGB camera), and its output is fused with the disparity depth results.

In the image above, we see an example of monocular AI fused with stereo depth results. Here, the OAK-D overlays the object detection results on the depth data. The box in the middle of each detected object marks the region from which that object’s depth is pulled (this is configurable in the API).
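To make the fusion step concrete, here is a minimal sketch of how a depth value could be pulled from a region in the middle of a detection box. This is not the OAK API: the function name `central_depth`, the `scale` parameter, the use of a median, and the convention that a depth of 0 means "no valid measurement" are all assumptions made for illustration.

```python
from statistics import median


def central_depth(depth_map, bbox, scale=0.5):
    """Median depth inside a centered sub-box of a 2D detection.

    Hypothetical helper, not part of the OAK API.
    depth_map: 2D list of depths in meters (0 is assumed to mean "invalid").
    bbox: (x_min, y_min, x_max, y_max) in pixels, max exclusive,
          assumed to lie fully inside depth_map.
    scale: fraction of the box width/height sampled around its center.
    """
    x_min, y_min, x_max, y_max = bbox
    cx, cy = (x_min + x_max) / 2, (y_min + y_max) / 2
    half_w = (x_max - x_min) * scale / 2
    half_h = (y_max - y_min) * scale / 2
    x0, x1 = int(cx - half_w), int(cx + half_w)
    y0, y1 = int(cy - half_h), int(cy + half_h)

    # Collect only valid depth samples from the central region.
    samples = [
        depth_map[y][x]
        for y in range(y0, y1)
        for x in range(x0, x1)
        if depth_map[y][x] > 0
    ]
    return median(samples) if samples else None


# Toy 8x8 depth map: background at 5 m, an object at 2 m, one invalid pixel.
depth = [[5.0] * 8 for _ in range(8)]
for row in range(2, 6):
    for col in range(2, 6):
        depth[row][col] = 2.0
depth[3][3] = 0.0

object_depth = central_depth(depth, (2, 2, 6, 6))
```

Sampling only the center of the box, rather than the whole box, keeps background pixels near the box edges from skewing the estimate, and taking a median makes the result less sensitive to isolated bad depth values.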

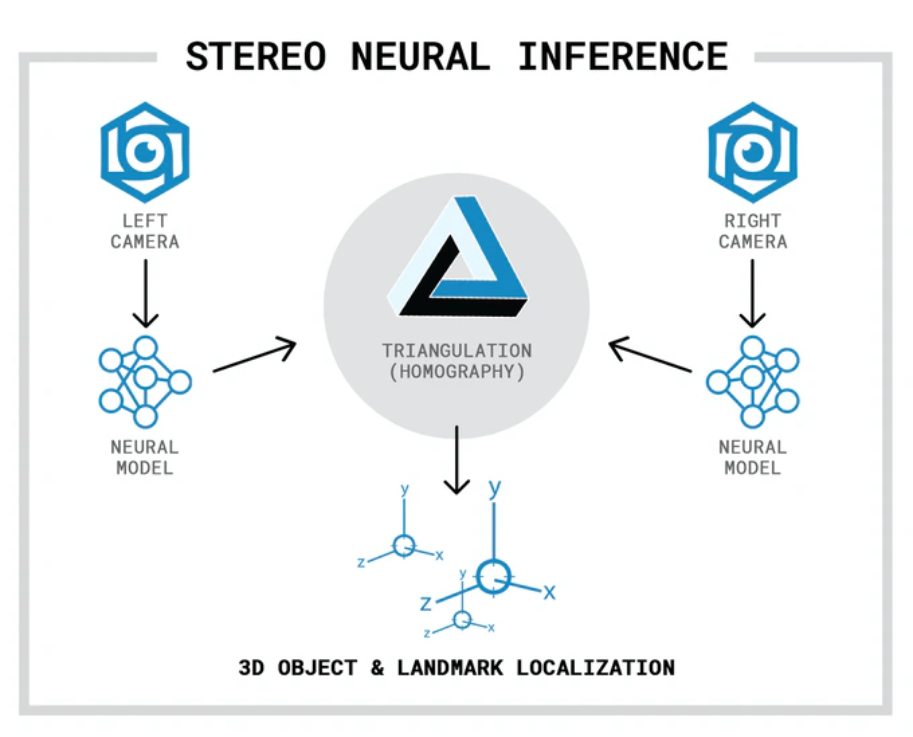

With Stereo Neural Inference, the neural network runs in parallel on OAK-D’s left and right cameras to produce 3D position data directly from the network’s results. In both modes, standard neural networks can be used regardless of which camera runs them. There is no need for the neural networks to be trained with 3D data, because the OAK-D automatically provides the 3D results in both cases using standard 2D-trained networks. The image below shows an example of stereo neural inference running on OAK-D: face detection followed by facial landmark detection, running in parallel on both the left and right cameras.

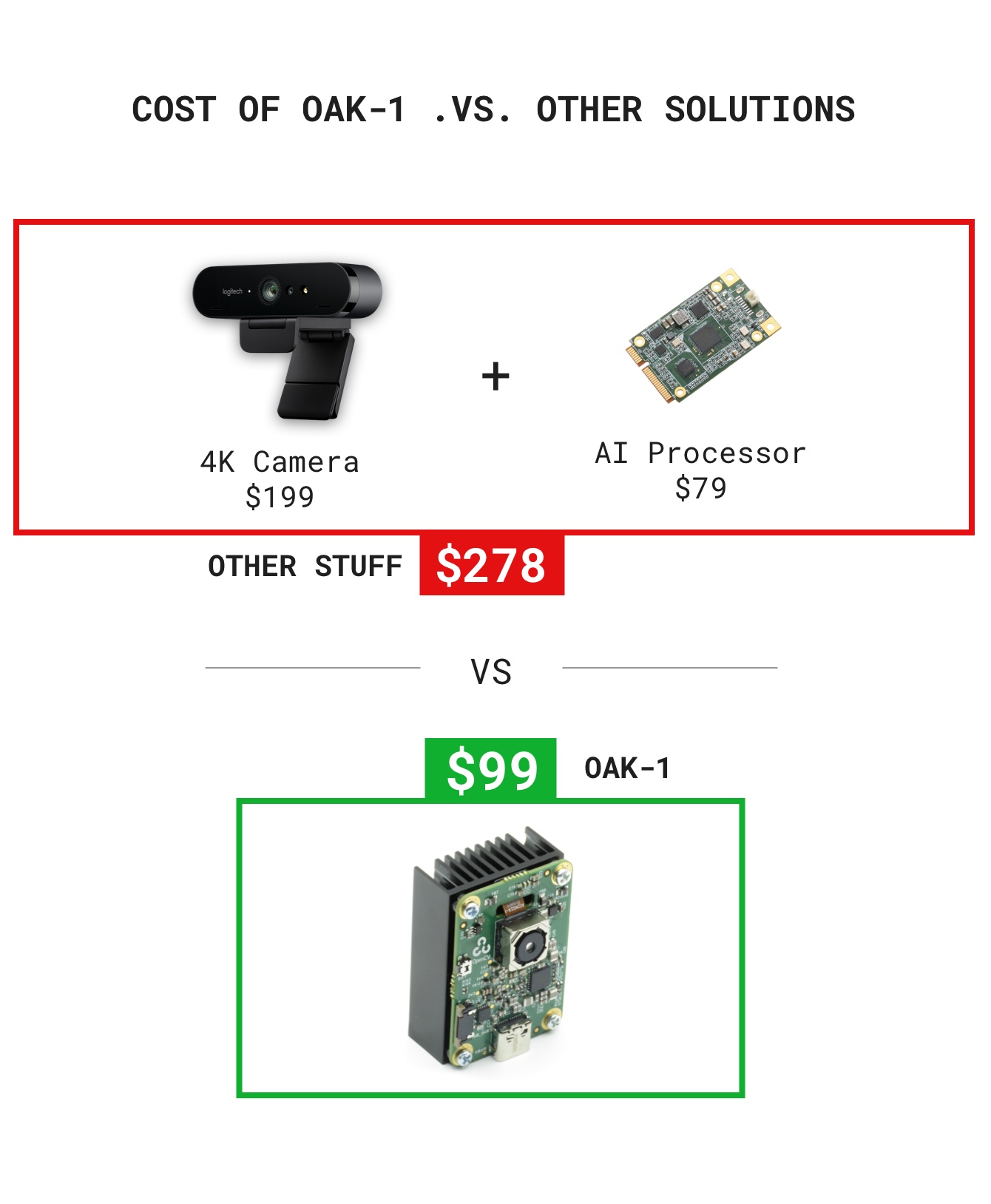
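The article does not say how the left and right results are combined into 3D positions. One standard way to do it is triangulation from disparity on a rectified stereo pair, sketched below under that assumption. The function name `triangulate` and all numeric values (focal length, baseline, principal point, pixel coordinates) are hypothetical and are not OAK-D specifications.

```python
def triangulate(left_pt, right_pt, focal_px, baseline_m, principal_pt):
    """Estimate a 3D point from one landmark seen in both cameras.

    Hypothetical helper, assuming rectified images so that the same
    landmark lies on the same row in the left and right views.
    left_pt, right_pt: (x, y) pixel coordinates of the landmark.
    focal_px: focal length in pixels.
    baseline_m: distance between the two cameras in meters.
    principal_pt: (cx, cy) optical center of the left camera in pixels.
    Returns (X, Y, Z) in meters in the left camera frame, or None.
    """
    xl, yl = left_pt
    xr, _ = right_pt
    disparity = xl - xr
    if disparity <= 0:
        return None  # point at infinity or a mismatched detection

    z = focal_px * baseline_m / disparity  # depth from similar triangles
    cx, cy = principal_pt
    x = (xl - cx) * z / focal_px
    y = (yl - cy) * z / focal_px
    return (x, y, z)


# Illustrative values only: the same facial landmark as detected by a
# 2D-trained network in the left and right images.
point = triangulate(
    left_pt=(700, 400),
    right_pt=(660, 400),
    focal_px=800,
    baseline_m=0.075,
    principal_pt=(640, 400),
)
```

Because the 3D step only needs matching 2D pixel coordinates, this kind of approach is consistent with the article's point that the networks themselves can be trained on ordinary 2D data.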

Once you’re done tinkering, OAK’s modular, FCC/CE-approved, open-source hardware ecosystem enables direct integration into your products. Both OAK modules are available in 3-packs and 10-packs. Backers can add further modules and other add-ons to their pledge in the post-campaign backer management system. The major benefit of OAK is that it provides, in a single cohesive solution, what would otherwise require cobbling together different hardware and software components.

Shipping, duty, and VAT will be collected separately after the campaign. The kit does not include a Raspberry Pi. Because the global pandemic has affected the prices of goods and services, shipping prices may fluctuate by a few US dollars. OAK will not ship to countries restricted by the US Government, and other shipping restrictions may apply. All OAK units are expected to ship by December 2020. Post-campaign fulfillment will be handled by a third-party service, through which backers will be able to add multiple OAK devices and add-ons to their pledge. The project will only be funded if it reaches its goal by Thu, August 13 2020 1:01 PM UTC +00:00.

For more information, visit the product announcement page on Kickstarter.