Tag: neural network

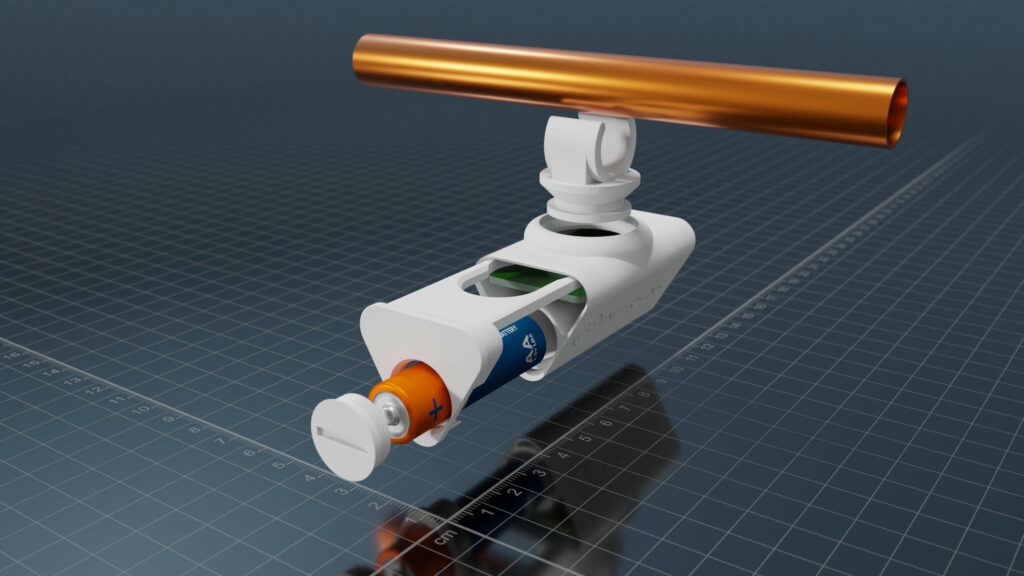

InferSens launched a battery-powered sensor with a neural decision processor for industrial applications

British on-device deep learning hardware platform manufacturer InferSens has launched a battery-powered ultra-low-power edge deep learning sensor for commercial and industrial applications. The hardware platform uses Syntiant’s NDP120 neural decision processor to enable highly...


Synopsys announces a neural processing unit IP and software toolchain

To address the increasing demands of artificial intelligence applications deployed at the edge, Synopsys has announced a neural processing unit IP to deliver high performance and support the most advanced neural network models. Overall, the new DesignWare ARC NPX6 NPU IP delivers up to...


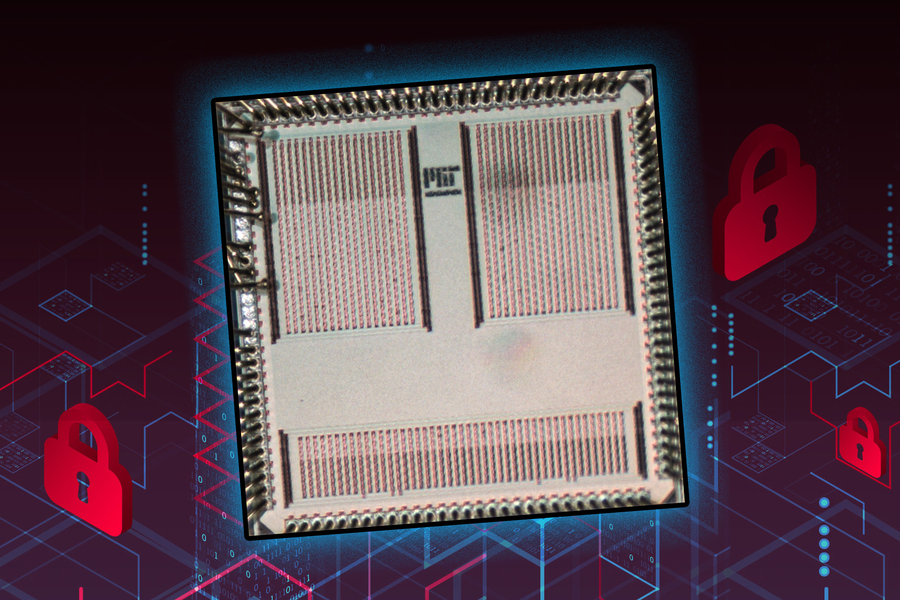

MIT Researchers Develop An ASIC To Defend Against Power-based Side Channel Attacks

A team of researchers affiliated with the MIT School of Engineering, the Indian Institute of Science, and Analog Devices has designed a tiny application-specific integrated circuit (ASIC) to defend against power-based side-channel attacks on an IoT device. Before getting into the...


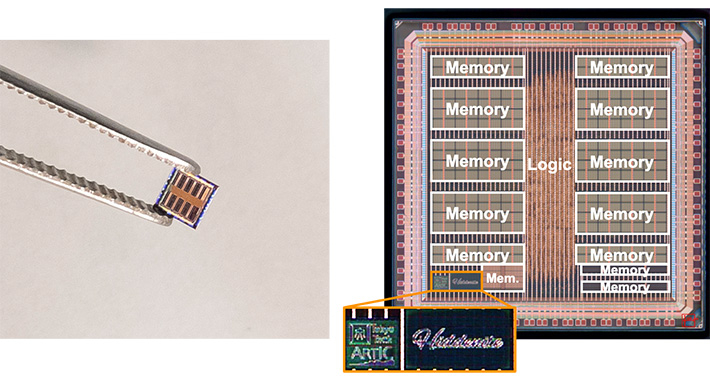

Hiddenite, AI Processor for Reduced Computational Power Consumption

AI accelerators are specialized hardware designs built to compute complex AI workloads in edge computing. While deep neural networks are assumed to be the optimal solution for AI tasks such as image recognition and object detection, a group of researchers from the...


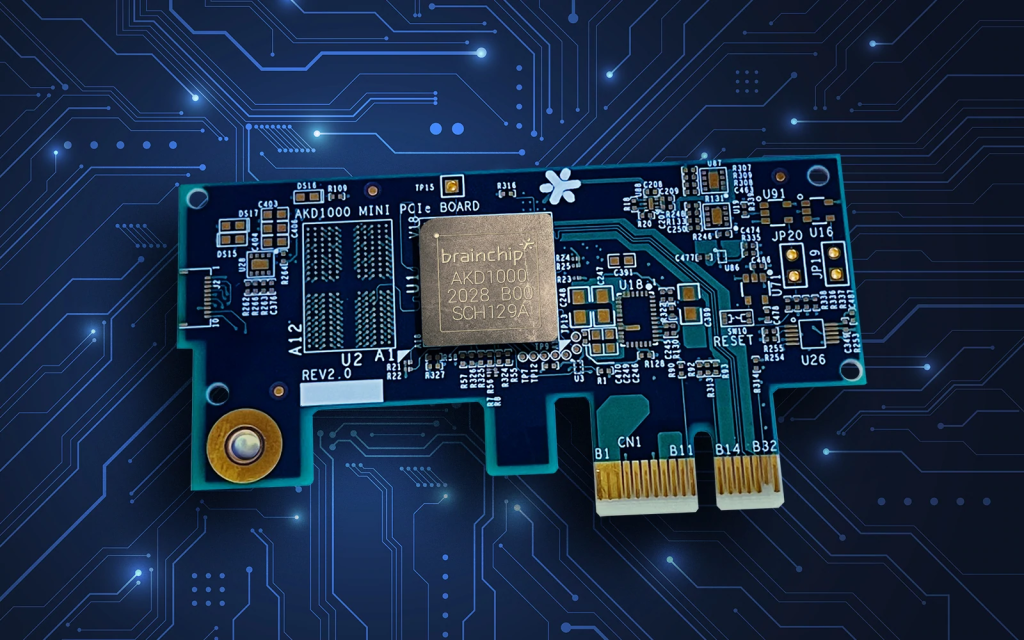

First Mini PCI Express board with spiking neural network chip

A PCIe board based on an advanced neural networking processor enables AI training and learning on the device itself, without dependency on the cloud. BrainChip has begun taking orders for the first commercially available Mini PCIe board using its Akida advanced neural networking...


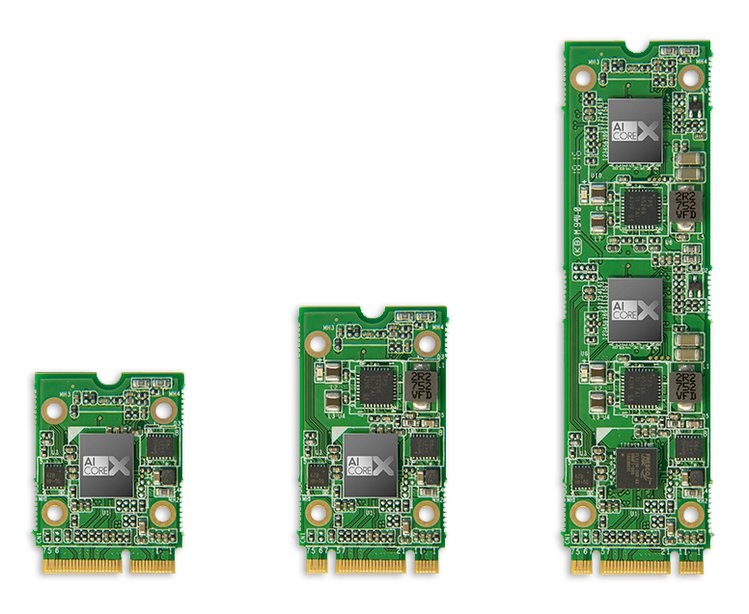

UP AI CORE X – Neural network accelerator for AI

Neural network accelerator for AI on the edge. Up to four trillion ops/sec with just a few watts. Neural networks are the future of AI, and these boards come into play to help you develop your intelligent visual system with ease. UP AI CORE X is a complete product line of neural...


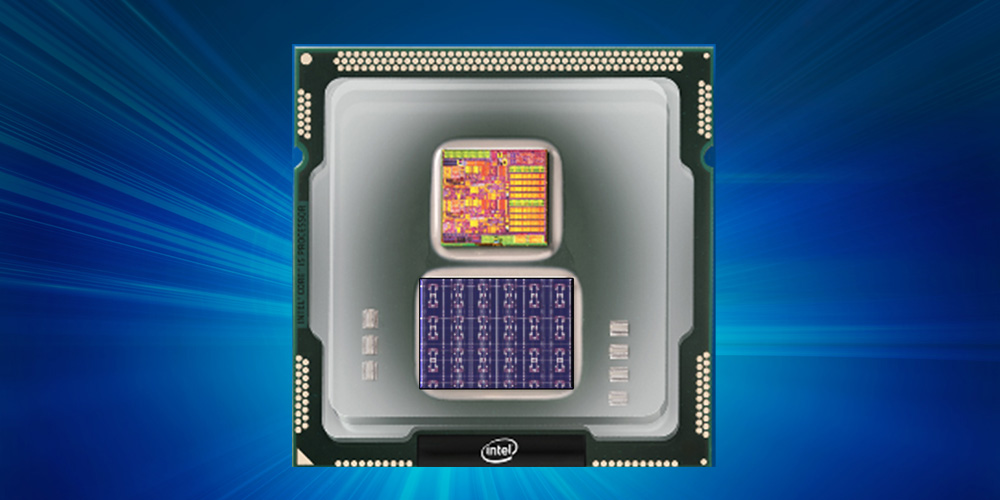

Intel Introduces Loihi – A Self-Learning Processor That Mimics Brain Functions

Intel has developed a first-of-its-kind self-learning neuromorphic chip, codenamed Loihi. It mimics animal brain functions by learning to operate based on various modes of feedback from the environment. Unlike convolutional neural network (CNN) and other deep learning processors,...


Role Of Vision Processing With Artificial Neural Networks In Autonomous Driving

In the next 10 years, technological advancement will bring more change to the automotive industry than we have seen in the last 50. One of the largest changes will be the move to autonomous vehicles, commonly known as self-driving cars. Scientists from many universities are striving to...
