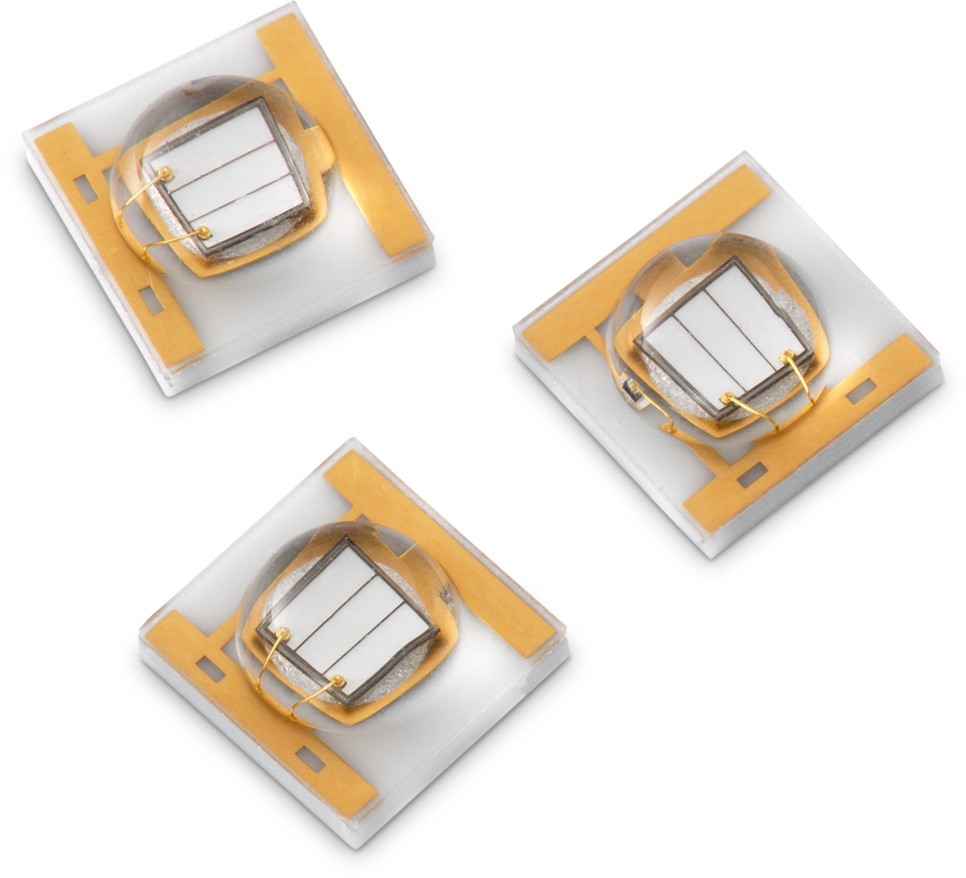

Onion Corporation, the company best known for its computing and IoT connectivity devices, is currently crowdfunding its LiDAR depth camera system, the Onion Tau. The Tau is a 3D depth-sensing camera: it produces 3D depth data rather than color frames.

The company describes the Tau as a plug-and-play USB 3D camera that requires no additional computation on the host to provide depth data. Connecting it to a computer over USB-C is enough for it to start streaming depth data. The Tau promises to be an affordable step beyond single-point depth sensing and one-dimensional scanning.

The LiDAR-style time-of-flight sensor in the camera outputs real-time depth data at a resolution of 160 x 60. It can also output a greyscale image of the scene. The depth-sensing range is 0.1 m to 7 m at a frame rate of 30 fps. For real-time processing, Onion has promised a web-based application for viewing the point cloud, along with a Python library and an OpenCV-compatible application programming interface, both of which will be open source.
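To make the idea of a point cloud concrete, here is a minimal sketch of how a 160 x 60 depth frame could be turned into 3D points. It does not use Onion's Python library, whose API is not described here. It assumes an ideal pinhole model, a centred principal point, the 70° x 20° field of view from the specifications, and depth values measured in metres along the optical axis. The function name `depth_to_points` and the synthetic frame are purely illustrative.

```python
import math

WIDTH, HEIGHT = 160, 60             # depth resolution quoted in the article
H_FOV_DEG, V_FOV_DEG = 70.0, 20.0   # field of view from the spec list


def depth_to_points(depth, width=WIDTH, height=HEIGHT,
                    h_fov_deg=H_FOV_DEG, v_fov_deg=V_FOV_DEG):
    """Convert a row-major depth frame (metres along the optical axis)
    into a list of (x, y, z) points using an ideal pinhole model."""
    # Focal lengths in pixels, derived from the field of view (assumption).
    fx = (width / 2) / math.tan(math.radians(h_fov_deg) / 2)
    fy = (height / 2) / math.tan(math.radians(v_fov_deg) / 2)
    # Assume the optical axis passes through the centre of the frame.
    cx, cy = (width - 1) / 2, (height - 1) / 2
    points = []
    for v in range(height):
        for u in range(width):
            z = depth[v][u]
            if z <= 0:  # treat non-positive values as invalid pixels
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


# Synthetic frame (not real sensor data): a flat wall 2 m away.
frame = [[2.0] * WIDTH for _ in range(HEIGHT)]
cloud = depth_to_points(frame)
print(len(cloud), "points")
```

A real time-of-flight sensor may report radial distance rather than distance along the optical axis, and its lens will introduce distortion, so an actual conversion would need the camera's calibration data.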

Specifications

- Depth Technology: LiDAR Time of Flight

- Depth Stream Output Resolution: 160 x 60

- Depth Stream Output Frame Rate: 30 fps

- Minimum Depth Distance: 0.1 meters

- Maximum Range: 7 meters

- Depth Field of View (FOV): 70° x 20°

- Connector: USB-C

- Mounting: 4 mounting holes on the Tau Camera
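A few derived figures help put these specifications in perspective. The short calculation below uses only the numbers listed above. The per-pixel angles are simple averages (field of view divided by pixel count), and the scene coverage assumes an ideal, distortion-free lens, so treat the results as rough estimates rather than published specifications.

```python
import math

width, height = 160, 60        # depth resolution
h_fov, v_fov = 70.0, 20.0      # field of view in degrees
fps = 30                       # frame rate
max_range = 7.0                # metres

pixels_per_frame = width * height                  # 9600
depth_values_per_second = pixels_per_frame * fps   # 288000

# Average angular spacing between neighbouring pixels.
deg_per_pixel_h = h_fov / width    # 0.4375 degrees
deg_per_pixel_v = v_fov / height   # roughly 0.33 degrees

# Size of the area covered by the full field of view at maximum range.
scene_width = 2 * max_range * math.tan(math.radians(h_fov / 2))   # about 9.8 m
scene_height = 2 * max_range * math.tan(math.radians(v_fov / 2))  # about 2.5 m

print(f"{pixels_per_frame} depth values per frame")
print(f"{depth_values_per_second} depth values per second")
print(f"{deg_per_pixel_h:.4f} x {deg_per_pixel_v:.4f} degrees per pixel")
print(f"{scene_width:.1f} m x {scene_height:.1f} m covered at {max_range} m")
```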

Use Cases

- Primary use case:

  - Use the bundled viewer to see the depth data rendered on your computer

  - Explore 3D depth mapping and its possibilities

- Secondary use case:

  - Use the Python API to build custom-made applications

  - Compatible with OpenCV

- Use cases this enables:

  - Environment mapping (like SLAM)

  - Augmented Reality

  - Computer vision applications:

    - Person counting/presence detection

    - Object detection

  - Robotics

  - Automation
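As an illustration of the presence-detection use case, the sketch below flags a frame as "occupied" when enough pixels are closer than a chosen distance. It works on plain nested lists rather than Onion's Python API, and the function name `presence_detected`, the 1.5 m threshold, and the 5% pixel fraction are arbitrary assumptions chosen for the example. Only the 0.1 m to 7 m valid range and the 160 x 60 frame size come from the specifications.

```python
MIN_RANGE_M, MAX_RANGE_M = 0.1, 7.0   # sensing range from the spec list


def presence_detected(depth, near_m=1.5, min_fraction=0.05):
    """Return True when at least `min_fraction` of the valid pixels
    are closer than `near_m` metres."""
    valid = near = 0
    for row in depth:
        for d in row:
            # Ignore readings outside the sensor's stated range.
            if MIN_RANGE_M <= d <= MAX_RANGE_M:
                valid += 1
                if d < near_m:
                    near += 1
    return valid > 0 and near / valid >= min_fraction


# Synthetic 160 x 60 frames (not real sensor data): an empty room with a
# wall at 4 m, and the same room with a 40 x 40 pixel object at 1 m.
empty = [[4.0] * 160 for _ in range(60)]
occupied = [row[:] for row in empty]
for v in range(10, 50):
    for u in range(60, 100):
        occupied[v][u] = 1.0

print(presence_detected(empty))     # False
print(presence_detected(occupied))  # True
```

A production system would more likely compare each frame against a learned background and filter out noise, but a fixed threshold is enough to show how directly depth data maps onto this kind of task.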

With LiDAR cameras moving into the mainstream, it is very likely that they will soon take over the “smart” devices universe. Onion’s Tau Camera, currently crowdfunding on Crowd Supply, aims to help make that happen.